This week the government published its long-awaited statutory report on copyright and artificial intelligence, meeting the 18 March deadline under the Data (Use and Access) Act 2025. The (expected) headline is that the opt-out is dead. The government has confirmed it "no longer has a preferred option" on the question of how AI developers should be permitted to use copyrighted works for training. The CMA also published guidance on agentic AI and consumer law, and Harvey announced an integration with Microsoft 365 Copilot that will put legal AI inside the tools most firms already use.

Government publishes copyright and AI report, drops opt-out

On 18 March 2026, the government published its report on copyright and artificial intelligence alongside an economic impact assessment, fulfilling the requirement under Section 137 of the Data (Use and Access) Act 2025. The report evaluates four policy options: maintaining the status quo (Option 0), requiring licensing for all AI training uses (Option 1), introducing a broad data mining exception without rights reservation (Option 2), and introducing a data mining exception with an opt-out for rightsholders (Option 3, the government's original preferred approach).

The central finding is that the government has abandoned its preference for Option 3, the opt-out model. The consultation received over 11,500 responses, of which 88% supported mandatory licensing for all AI training uses. Only 3% backed the opt-out. That level of opposition made the original proposal politically untenable, particularly after the House of Lords Communications and Digital Committee's 180-page report of 6 March, which called for a licensing-first framework (covered in last week's issue).

The government has not, however, committed to any specific alternative. It says it is "continuing to consider all options," which is a notable step back from the decisiveness that many in the creative industries were hoping for. The report identifies four focus areas for further work: digital replicas (with a consultation planned for summer 2026), labelling of AI-generated content, creator control and transparency, and support for independent creatives. On digital replicas, the government acknowledges these can be harmful when someone's likeness is replicated without permission, and has signalled it may be willing to act, though it stops short of proposing legislation for now.

One concrete proposal in the report is the removal of copyright protection for computer-generated works under Section 9(3) of the Copyright, Designs and Patents Act 1988. This provision, which currently assigns authorship of works generated by a computer "in circumstances such that there is no human author" to the person who made the arrangements for the work's creation, has long been a curiosity of UK copyright law. Its removal would align the UK more closely with jurisdictions that require a human author for copyright to subsist.

For UK lawyers, the practical position is that nothing changes immediately, but the direction of travel is clearer. Firms advising on IP should note that the opt-out model is unlikely to return and that licensing will be central to whatever emerges. Firms advising AI developers need to factor in the probability that the current ambiguity will resolve in favour of rightsholders, not developers.

Takeaways

Act: Read the government's statement of progress and brief clients who are exposed on either side of the copyright and AI question. The creative industries will want to understand the timeline; AI developers will want to assess their licensing position.

Watch: The summer 2026 consultation on digital replicas, and any indication in the upcoming King's Speech as to whether the government will include an AI Bill. The Lords report covered in last week's issue and this statutory report have together created significant momentum, but legislation remains uncertain.

Risk: The gap between the old preferred option (now abandoned) and whatever replaces it creates a period of regulatory uncertainty. Clients training AI on UK copyrighted content without licences face increasing exposure, even in the absence of new legislation, because the existing copyright framework already provides for enforcement (as the Lords report emphasised).

On your radar

CMA publishes guidance on agentic AI and consumer law: The Competition and Markets Authority published "Agentic AI and consumers" on 9 March 2026, setting out how existing consumer protection law applies to businesses that deploy AI agents (systems that act autonomously on behalf of consumers, for example by booking services, making purchases, or managing subscriptions). The CMA warns that AI agents could manipulate consumer choices, push higher-priced products, and serve the interests of the deploying business rather than the consumer. It identifies risks including AI hallucinations leading to costly autonomous errors, and consumers gradually losing the ability to scrutinise automated decisions. The CMA is not proposing new rules. Instead, it argues that the Digital Markets, Competition and Consumers Act 2024 and the Consumer Rights Act 2015 already apply whether a decision is made by a human or a machine. Why it matters for UK lawyers: firms advising on consumer-facing technology, financial services, or e-commerce should read this guidance carefully. Any client deploying an AI agent that interacts with consumers needs to ensure that the agent's conduct meets the same standard as a human representative. The practical steps the CMA sets out are a useful checklist. (GOV.UK / Lewis Silkin)

Harvey announces Microsoft 365 Copilot integration: Legal AI platform Harvey announced on 4 March that it will integrate directly with Microsoft 365 Copilot, allowing lawyers to access Harvey's legal research and document analysis capabilities from within Word, Outlook, and Teams. Users will be able to @Harvey in Copilot or use the sidebar to ask legal questions, analyse documents, or pull content from their Harvey vaults. The integration also includes an "Agentic Word" capability for multi-step redlining and analysis of lengthy agreements. The initial launch is expected in Q2 2026. Why it matters for UK lawyers: for firms already using both Harvey and Microsoft 365, this reduces the friction of switching between tools. A LegalLeaders study cited by Harvey found that nearly 70% of in-house lawyers spend over an hour each day moving between systems. The integration is worth watching, though it remains to be seen how it performs in practice. (Artificial Lawyer / Legal IT Insider)

Anthropic launches Claude Partner Network, raising questions for legal tech: Anthropic has launched the Claude Partner Network, a programme backed by $100 million in funding to help enterprises adopt Claude, with consulting firms including Deloitte and Accenture among the initial members. The announcement comes weeks after Anthropic's legal plugin launch caused share price drops across legal data companies including RELX, Thomson Reuters, and Wolters Kluwer. Artificial Lawyer has published a thoughtful piece on the tension this creates. Anthropic is simultaneously courting legal tech vendors as partners (Thomson Reuters, LexisNexis) and competing with them by selling directly to law firms and in-house teams through its own products. Why it matters for UK lawyers: the competitive dynamics between foundation model providers and legal tech incumbents are worth understanding if your firm is evaluating AI tools. It is something this author is watching very closely. (Artificial Lawyer)

Bird & Bird lawyer builds BunTool, a free court bundle maker: Tristan Sherliker, a solicitor advocate at Bird & Bird specialising in IP law, has released BunTool, a free desktop application that automates the creation of PDF court bundles. The tool handles pagination, hyperlinking, bookmarking, and formatting, tasks which Sherliker notes can "easily take an experienced lawyer an hour" using standard desktop software. BunTool requires no subscription or online account and runs locally, meaning no documents are uploaded to external servers. It has been used by law firms, barristers' chambers, charities, and litigants in person. Why it matters for UK lawyers: this is a genuinely practical tool for anyone who prepares court bundles, and the fact that it runs offline addresses the confidentiality concerns that attach to cloud-based alternatives. Worth a look, particularly for smaller firms and those doing pro bono work. I am excited to try it and see how it compares with my own firm's bundle tools. (Artificial Lawyer)

Legalweek 2026: AI adoption surges, but "no longer the differentiator": Legalweek 2026 took place in New York from 9 to 12 March, bringing together over 7,000 legal professionals. The headline statistic, from the Relativity and FTI General Counsel Report, is that generative AI adoption among corporate legal departments jumped from 44% to 87% in a single year. However, the conference theme was that AI is now the baseline, not the differentiator. Why it matters for UK lawyers: the adoption numbers are US-focused, but the shift from "should we use AI?" to "how do we measure the value of AI?" is one UK firms should expect to follow. (Legal IT Insider / Above the Law)

Ad Break

In order to help cover the running costs of this newsletter, please check out the advert below. In line with my promises from the start, adverts will always be declared and actual products that I have tried, with some brief thoughts from me.

Can this idea actually make money?

The fastest way to find out is simple — launch a newsletter and website in minutes, then turn what you know into something people can buy.

With beehiiv’s Digital Product Suite, your expertise becomes real products: a short guide, a playbook, a set of templates, or limited access to your time. No friction, and no code required. Just create, price it, and share it with your audience.

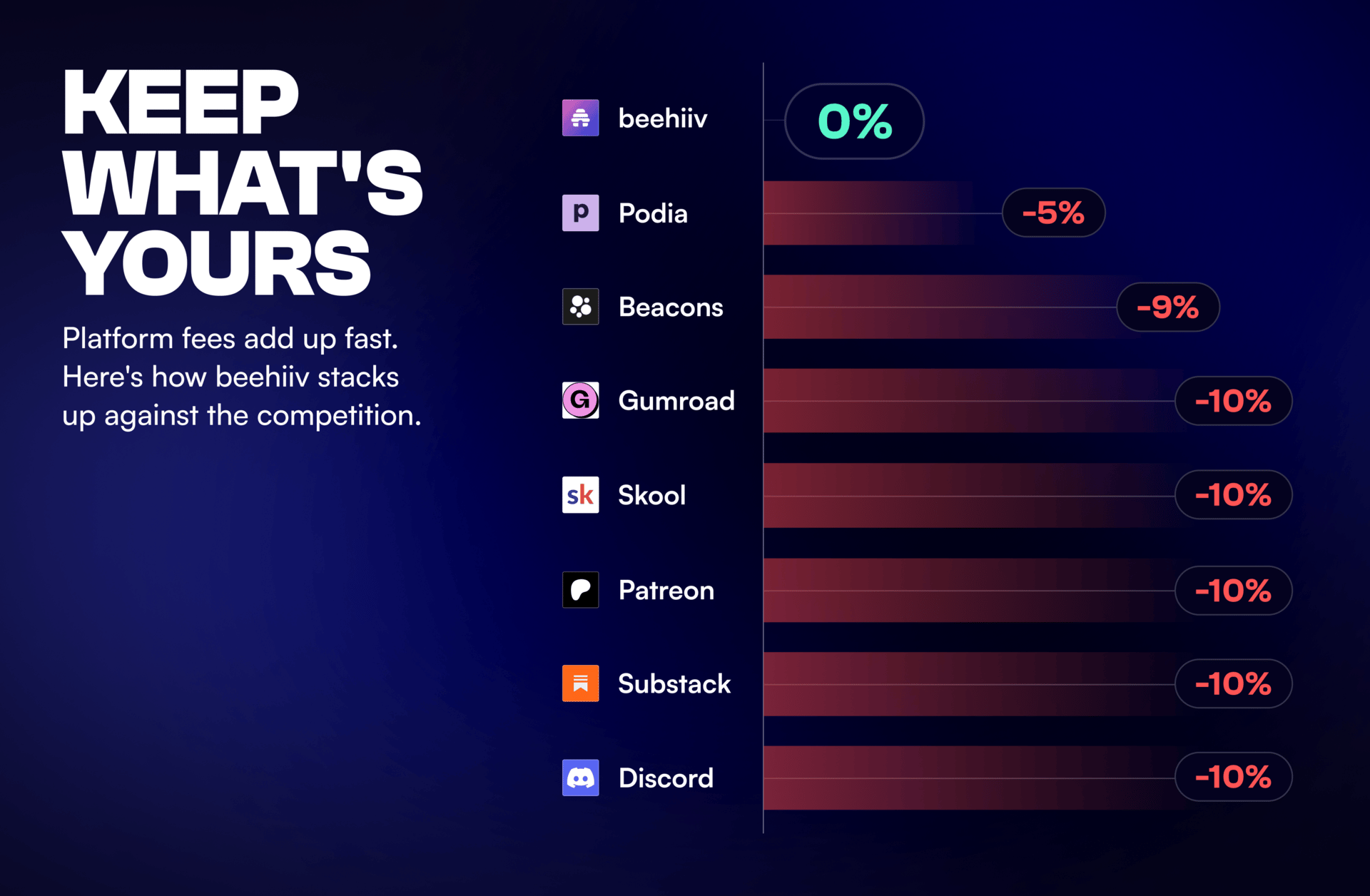

And unlike other platforms that quietly take 5–10% of every sale, beehiiv takes 0%. What you earn is yours to keep.

For a limited time, get 30% off your first 3 months on beehiiv with code PRODUCT30.

For Review

Copyright and artificial intelligence: statement of progress (GOV.UK)

The full statutory report published on 18 March 2026. Covers all four policy options evaluated, the consultation response data (11,500+ responses), the economic impact assessment, and the government's four focus areas for further work. Essential reading for anyone advising on IP, media, technology, or AI development in the UK.

Read: GOV.UK

Agentic AI and consumers (Competition and Markets Authority)

The CMA's research paper and guidance on how consumer protection law applies to businesses deploying AI agents. Covers dark patterns, reliability risks, collusion concerns, and a four-step compliance framework. Practical and clearly written, with relevance for any firm advising on consumer-facing AI deployments.

Read: GOV.UK

AI and Legal Advice Privilege Guide (Simmons & Simmons)

A practical guide and policy framework addressing the risks that AI tools pose to legal professional privilege. The guide explains the distinction between open and closed AI systems, highlights key privilege risks (including the consequences set out in the Munir judgment, covered in this newsletter on 6th March), and provides a pro forma staff policy that organisations can adapt. Useful for any firm or in-house team putting internal AI governance in place.

Read: Simmons & Simmons

"The AI policy pivot signalled by new copyright reports" (Pinsent Masons)

Gill Dennis and Malcolm Dowden analyse the government's copyright report alongside the Lords committee recommendations and recent ministerial statements. A useful secondary source for understanding the direction of UK AI copyright policy, particularly for firms that need to brief clients quickly.

Read: Pinsent Masons

Practice Prompt

Try the prompt below to map your firm's (or your client's) AI copyright exposure in light of this week's government report. Before running it, fill in the context, the constraints, and anything else marked with {}. Remember to adhere to the Golden Rules and do not upload confidential or privileged information to public tools.

You are acting as an IP risk adviser for a {law firm / in-house legal team / AI developer}.

Context: On 18 March 2026 the UK government published its statutory report on copyright and AI under Section 137 of the Data (Use and Access) Act 2025. The government has abandoned its preferred opt-out model and confirmed it has no preferred option. Mandatory licensing was supported by 88% of consultation respondents. A summer 2026 consultation on digital replicas is planned. The removal of Section 9(3) CDPA (copyright in computer-generated works) has been proposed.

Task: Based on the above, produce a structured risk assessment covering:

1. Training data exposure: Identify which AI tools or models used by {the firm / the client} may rely on copyrighted training data without express licences. Note any tools where the vendor's terms of service address (or fail to address) copyright indemnities.

2. Output risk: Assess whether any AI-generated outputs could engage the proposed removal of Section 9(3) CDPA protection, and what this means for any outputs currently treated as the firm's or client's own copyright works.

3. Digital replicas: Flag any use of AI that generates or manipulates likenesses, voices, or personal data in a way that may fall within the scope of the planned summer consultation.

4. Recommended actions: For each risk identified, propose a concrete next step (e.g., review vendor terms, obtain a licence, implement an internal policy, brief a specific team).

Constraints: {Add any sector-specific constraints, e.g., "We are a media company that commissions AI-generated images" or "We are a law firm using AI for document review only."}

Format: Use a table with columns: Risk area | Current position | Exposure level (low/medium/high) | Recommended action | Timeline.

How did we do?

Hit reply to share feedback or tell me what you would like covered in future issues. I read every email!

Thanks for reading,

Serhan, UK Legal AI Brief

Disclaimer

Guidance and news only. Not legal advice. Always use AI tools safely.
