This week, research from Irwin Mitchell reveals that AI-generated legal claims are already landing on the desks of many legal teams, with more than a third of respondents reporting a rise in low-merit, AI-drafted claims. Meanwhile, the UK leads the world in legal AI adoption, Artificial Lawyer examines why legal AI keeps getting context wrong, and Anthropic pushes Claude further into agentic territory.

AI-generated claims are already hitting UK businesses

Research published by Irwin Mitchell this month, based on a survey of more than 80 senior in-house lawyers, has found that 35% of in-house legal teams are seeing an increase in AI-generated claims, particularly from customers. These are not sophisticated pieces of litigation. They are lengthy, highly structured arguments produced by generative AI tools, often lacking substantive merit but polished enough to require detailed review and a formal response.

The practical burden is real. According to Katie Byrne, Head of Commercial Dispute Resolution at Irwin Mitchell, these claims are rarely successful but still impose a material cost, consuming time and budget on claims handling. The research also found that 55% of respondents rank data protection and privacy violations as the most significant AI-related litigation risk to their organisation, and 69% report rising cyber insurance premiums, with 67% expanding cover or revisiting liability limits.

This development deserves attention because it marks a shift in how AI affects the legal landscape. Until now, most of the discussion in this newsletter (and elsewhere) has focused on AI as a tool for lawyers, something firms deploy to work more efficiently. This research points to the other side of the equation: AI as a tool used against businesses, generating volume that strains legal teams regardless of the merits.

The parallel with the SRA's forthcoming research (due in April 2026, and noted in this newsletter on 12 March) is worth drawing. The SRA has flagged that roughly a third of the public has used generative AI to help identify legal issues. Some of that usage will be genuinely constructive, helping people articulate legitimate grievances more clearly. But some of it will produce exactly what Irwin Mitchell's respondents are seeing: plausible-sounding claims that take time to deal with and go nowhere.

Read: Irwin Mitchell

On your radar

UK law firms lead the world in AI adoption: The Profitability in Law: Global Report 2026 has found that 31% of UK legal professionals use integrated or legal-specific AI tools daily, the highest rate globally and more than half again the rate seen in Australia and New Zealand (20%). In total, 62% of UK practitioners are active, regular users of integrated AI. The report suggests the UK is not just ahead today but compounding that advantage. Why it matters for UK lawyers: for firms still weighing whether to invest in AI tooling, the data suggests the market has already moved. The risk is no longer being an early adopter but being a late one. (Legal Futures)

Artificial Lawyer: why legal AI keeps getting context wrong: Electra Japonas, writing in Artificial Lawyer, argues that legal AI consistently fails because it lacks the deep organisational, transactional, and market context that lawyers draw on instinctively. Simply uploading contracts is not enough for enterprise teams, which need comprehensive, system-wide data and credible market benchmarks to get reliable outputs. Why it matters for UK lawyers: firms investing in AI should be thinking as carefully about their data infrastructure as about which tool they subscribe to. (Artificial Lawyer)

Anthropic ships Claude computer agent and Code auto mode: On 24 March, Anthropic announced that Claude can now operate as a computer agent, opening applications, navigating browsers, and completing multi-step tasks on a user's desktop. A separate update to Claude Code introduces "auto mode," which allows the AI to self-check actions for safety without requiring permission for every step. Users can also message Claude from a phone and have it execute tasks on a desktop machine (Dispatch mode). Why it matters for UK lawyers: the move toward agentic AI (where the model acts rather than merely responds) is accelerating. For firms already using Claude, these capabilities are worth testing in controlled settings. The usual caveats apply: any agentic tool that acts on a lawyer's behalf must be supervised, and the privilege and confidentiality considerations set out in the Munir judgment (covered on 6 March) remain directly relevant. (CNBC)

New York considers anti-AI bill, and UK lawyers should pay attention: New York's Senate is advancing a bill (S7263) that would impose liability on AI chatbots that provide "substantive responses, information, or advice" which, if given by a person, would constitute unauthorised practice of law. Artificial Lawyer has published a detailed analysis arguing that the bill, on its broader reading, could prevent anyone who is not a regulated lawyer from using AI tools for anything related to a legal matter, effectively ending self-serve legal AI for businesses and restricting access to justice for consumers. The bill's sponsor, Senator Kristen Gonzalez, has since suggested the intent is narrower (targeting actual impersonation of a licensed professional), but the statutory text remains wide.

The UK is in a very different position, and it is worth understanding why. Under the Legal Services Act 2007, only a narrow set of activities is reserved to authorised persons: exercising rights of audience, conducting litigation, probate activities, and a handful of others. General legal advice, contract drafting, compliance guidance, and most of what AI tools currently do well are unreserved in England and Wales. That means, in principle, an AI tool can do these things without a solicitor in the loop, and so can a paralegal, a claims handler, or a member of the public.

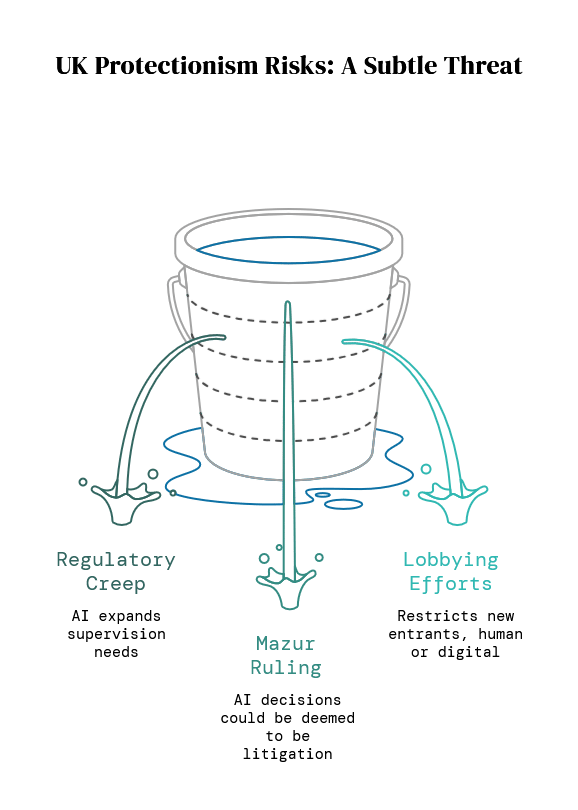

The risk of UK-style protectionism is therefore subtler than a single bill. In this author's view, it could come from three directions:

First, regulatory creep: the SRA or BSB could expand what counts as requiring supervision, particularly as AI tools become more capable and start handling tasks closer to the reserved boundary.

Second, the Mazur ruling (currently on appeal) has already raised the question of whether AI making key decisions in a case could amount to "conducting litigation," which is a reserved activity. If the appeal upholds a broad interpretation, that could draw a bright line around certain AI-driven litigation workflows.

Third, professional body lobbying: there is always debate within the profession about bringing new entrants (whether human or digital) within the regulatory framework, often framed as consumer protection but functioning as market restriction.

This author's view is that the UK's existing framework is, for now, the better approach. The reserved activities model allows innovation in unreserved areas while protecting the public where the stakes are highest. The danger is not that the UK passes a New York-style bill, but that the regulatory perimeter quietly expands through guidance, practice notes, and judicial interpretation until the practical effect is much the same. It is worth watching closely. (Artificial Lawyer / NY Senate Bill S7263)

Ad Break

To help cover the running costs of this newsletter, please check out the advert below. In line with my promises from the start, adverts will always be declared and will only be for products that I have actually tried, with some brief thoughts from me.

The Gold Standard for AI News

AI keeps coming up at work, but you still don't get it?

That's exactly why 1M+ professionals working at Google, Meta, and OpenAI read Superhuman AI daily.

Here's what you get:

Daily AI news that matters for your career - Filtered from 1000s of sources so you know what affects your industry.

Step-by-step tutorials you can use immediately - Real prompts and workflows that solve actual business problems.

New AI tools tested and reviewed - We try everything to deliver tools that drive real results.

All in just 3 minutes a day

For Review

"Why Legal AI Keeps Getting Context Wrong" (Artificial Lawyer)

Electra Japonas's piece argues that legal AI fails not because the models are weak but because organisations feed them insufficient context. Enterprise legal teams need system-wide data, credible benchmarks, and deep organisational knowledge, not just a handful of uploaded contracts. A worthwhile read for anyone responsible for AI implementation at a firm or in-house team.

Read or listen: Artificial Lawyer

Claude Legal Prompt Shock and LegalOn GPT 5.4 Review (Artificial Lawyer)

Two stories in one. First, a Twitter user shared legal contract prompts for Claude using the names of well-known law firms, sparking debate about whether such prompt engineering produces accurate results (the consensus: treat with caution). Second, a review of OpenAI's GPT 5.4 found a 21% improvement in contract drafting accuracy compared to earlier versions. Useful context for anyone benchmarking AI tools for contract work.

Read or listen: Artificial Lawyer

AI Driving Rise In Low Merit Legal Claims Against UK Businesses (Irwin Mitchell)

The full research findings, based on a survey of over 80 senior in-house lawyers. Covers the rise in AI-generated claims, data protection as the top AI litigation risk, cyber insurance premium trends, and how legal teams are responding. Worth reading in full if you advise commercial clients or run an in-house team.

Read or listen: Irwin Mitchell

Practice Prompt

Try the prompt below to summarise an initial bundle of documents in a litigation matter. Fill in the context, the constraints, and any other fields marked with {}. Remember to adhere to the Golden Rules and do not upload confidential or privileged information to public tools.

You are a litigation solicitor at a UK law firm. I am going to provide you with an initial bundle of documents relating to a new litigation matter. Please review the documents and produce a structured summary covering:

1. **Parties**: Identify all parties mentioned, their roles, and any relevant relationships.

2. **Chronology**: Produce a date-ordered chronology of key events based on the documents. Use the format: [Date] — [Event] — [Source document]. Where a date is unclear, note it as "[Approximate]" or "[Undated]" and explain why.

3. **Key issues**: Identify the principal legal and factual issues that appear to arise, based only on what the documents say. Do not speculate beyond the material provided. Flag any gaps (e.g., missing correspondence, references to documents not included in the bundle).

4. **Cast list**: List every individual and organisation mentioned, with a one-line note of who they are and how they relate to the matter.

5. **Open questions**: Note any points that are unclear or where further instructions or documents would be needed before advice can be given.

Context:

- Area of law: {e.g., breach of contract, professional negligence, property dispute}

- Client: {name and whether claimant or defendant}

- Stage: {e.g., pre-action, post-Letter of Claim, proceedings issued}

- Any particular focus: {e.g., limitation, causation, quantum, specific disclosure}

Constraints:

- Use UK legal terminology throughout (e.g., "claimant" not "plaintiff", "disclosure" not "discovery").

- Do not provide legal advice. This is a factual summary to support the supervising solicitor's review.

- Where a document is ambiguous, flag the ambiguity rather than resolving it.

- Keep the chronology concise. Each entry should be one sentence.

How did we do?

Hit reply and tell me what you would like covered in future issues, or share any other feedback. I read every email!

Thanks for reading,

Serhan, UK Legal AI Brief

Disclaimer

Guidance and news only. Not legal advice. Always use AI tools safely.
